Table of Contents

- Executive Summary

- Introduction

- A Tale of Two Algorithms

- Russian Interference, Radicalization, and Dishonest Ads: What Makes Them So Powerful?

- Algorithmic Transparency: Peeking Into the Black Box

- Who Gets Targeted—Or Excluded—By Ad Systems?

- When Ad Targeting Meets the 2020 Election

- Regulatory Challenges: A Free Speech Problem—and a Tech Problem

- So What Should Companies Do?

- Key Transparency Recommendations for Content Shaping and Moderation

- Conclusion

So What Should Companies Do?

What would a better framework, one that puts democracy, civil liberties, and human rights above corporate profits, look like?

It is worth noting that Section 230 protects companies from liability for “any action voluntarily taken in good faith” to enforce their rules, but it provides no guidance as to what “good faith” actually means for how companies should govern their platforms.1 Some legal scholars have proposed reforms that would keep Section 230 in place but clarify the steps companies must take to demonstrate good-faith efforts to mitigate harm in order to remain exempt from liability.2

Companies hoping to convince lawmakers not to abolish or drastically change Section 230 would be well advised to proactively and voluntarily implement a number of policies and practices to increase transparency and accountability. This would help to mitigate real harms that users or communities can experience when social media is used by malicious or powerful actors to violate their rights.

First, companies’ speech rules must be clearly explained and consistent with established human rights standards for freedom of expression. Second, these rules must be enforced fairly according to a transparent process. Third, people whose speech is restricted must have an opportunity to appeal. And finally, the company must regularly publish transparency reports with detailed information about the steps that the company takes to enforce its rules.3

Since 2015, RDR has encouraged internet and telecommunications companies to publish basic disclosures about their policies and practices that affect their users’ rights. Our annual Corporate Accountability Index benchmarks major global companies against each other and against standards grounded in international human rights law.

Much of our work measures companies’ transparency about the policies and processes that shape users’ experiences on their platforms. We have found that—absent a regulatory agency empowered to verify that companies are conducting due diligence and acting on it—transparency is the best accountability tool at our disposal. Once companies are on the record describing their policies and practices, journalists and researchers can investigate whether they are actually telling the truth.

Transparency allows journalists, researchers, the public, and their elected representatives to make better-informed decisions about the content they receive and to hold companies accountable.

We believe that platforms have the responsibility to set and enforce the ground rules for user-generated and ad content on their services. These rules should be grounded in international human rights law, which provides a framework for balancing the competing rights and interests of the various parties involved.4 Operating in a manner consistent with international human rights law will also strengthen the United States’ long-standing bipartisan policy of promoting a free and open global internet.

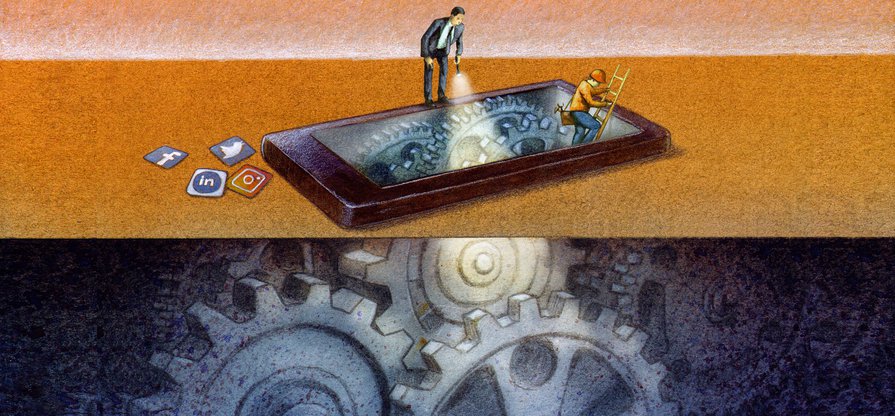

But again, content is only part of the equation. Companies must take steps to publicly disclose the different technological systems at play: the content-shaping algorithms that determine what user-generated content users see, and the ad-targeting systems that determine who can pay to influence them. Specifically, companies should explain the purpose of their content-shaping algorithms and the variables that influence them so that users can understand the forces that cause certain kinds of content to proliferate, and other kinds to disappear.5 Currently, companies are neither transparent about nor accountable for how their targeted-advertising policies and practices and their use of automation shape the online public sphere by determining the content and information that internet users receive.6

Companies also need to publish their rules for ad targeting, and be held accountable for enforcing those rules. Our research shows that while Facebook, Google, and Twitter all publish ad-targeting rules that list broad audience categories that advertisers are prohibited from using, the categories themselves can be excessively vague. Twitter, for instance, bans advertisers from using audience categories “that we consider sensitive or are prohibited by law, such as race, religion, politics, sex life, or health.”7 Nor do these platforms disclose any data about the number of ads they removed for violating their ad-targeting rules (or about other actions they took).8

Facebook says that advertisers can target ads to custom audiences, but prohibits them from using targeting options “to discriminate against, harass, provoke, or disparage users or to engage in predatory advertising practices.” However, not everyone can see what these custom audience options are, since they are only available to Facebook users. Facebook also publishes no data about the number of ads removed for breaching ad-targeting rules.

Platforms should set and publish rules for targeting parameters, which should apply equally to all ads—a practice like this would make it much more difficult for companies to violate anti-discrimination laws like the Fair Housing Act. Moreover, once an advertiser has chosen their targeting parameters, companies should refrain from further optimizing ads for distribution, as this may lead to further discrimination.9

Platforms should not differentiate between commercial, political, and issue ads, for the simple reason that drawing such lines fairly, consistently, and at a global scale is impossible and complicates the issue of targeting.

Limiting targeting, as Federal Election Commissioner Ellen Weintraub has argued,10 is a much better approach, though here again, the same rules should apply to all types of ads. Eliminating targeting practices that exploit individual internet users’ characteristics (real or assumed) would protect privacy, reduce filter bubbles, and make it harder for political advertisers to send different messages to different constituent groups. This is the kind of reform that will be addressed in the second part of this report series.

In addition, companies should conduct due diligence through human rights impact assessments that cover what their rules are, how those rules are enforced, and what steps the company takes to prevent violations of users’ rights. This process forces companies to anticipate worst-case scenarios and change their plans accordingly, rather than simply rolling out new products or entering new markets and hoping for the best.11 A robust practice like this could reduce or eliminate some of the phenomena described above, ranging from the proliferation of election-related disinformation to YouTube’s tendency to recommend extreme content to unsuspecting users.

All systems are prone to error, and content moderation processes are no exception. Platform users should have access to timely and fair appeals processes to contest a platform’s decision to remove or restrict their content. While the details of individual enforcement actions should be kept private, transparency reporting provides essential insight into how the company is addressing the challenges of the day. Facebook, Google, Microsoft, and Twitter have finally started to publish such reports,12 though their disclosures could be much more specific and comprehensive.13 Notably, these reports should include data about the enforcement of ad content and targeting rules.

Our complete transparency and accountability standards can be found on our website. Key transparency recommendations for content shaping and content moderation are presented in the next section.

Citations

- Kosseff, Jeff. 2019. The Twenty-Six Words That Created the Internet. Ithaca: Cornell University Press.

- Citron, Danielle Keats, and Benjamin Wittes. 2017. “The Internet Will Not Break: Denying Bad Samaritans §230 Immunity.” Fordham Law Review 86(2): 401–23.

- See also Pírková, Eliška, and Javier Pallero. 2020. 26 Recommendations on Content Governance: A Guide for Lawmakers, Regulators, and Company Policy Makers. Access Now.

- Kaye, David. 2019. Speech Police: The Global Struggle to Govern the Internet. New York: Columbia Global Reports.

- Singh, Spandana. 2019. Everything in Moderation: An Analysis of How Internet Platforms Are Using Artificial Intelligence to Moderate User-Generated Content. Washington D.C.: New America’s Open Technology Institute; Singh, Spandana. 2019. Rising Through the Ranks: How Algorithms Rank and Curate Content in Search Results and on News Feeds. Washington D.C.: New America’s Open Technology Institute.

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems – Pilot Study and Lessons Learned. Washington D.C.: New America.

- Twitter. 2020. “Privacy Policy.” (Accessed on February 20, 2020).

- Ranking Digital Rights. 2020. The RDR Corporate Accountability Index: Transparency and Accountability Standards for Targeted Advertising and Algorithmic Systems – Pilot Study and Lessons Learned. Washington D.C.: New America.

- Ali, Muhammad et al. 2019. “Discrimination through Optimization: How Facebook’s Ad Delivery Can Lead to Biased Outcomes.” Proceedings of the ACM on Human-Computer Interaction 3(CSCW): 1–30.

- Weintraub, Ellen L. 2019. “Don’t Abolish Political Ads on Social Media. Stop Microtargeting.” Washington Post.

- Allison-Hope, Dunstan. 2020. “Human Rights Assessments in the Decisive Decade: Applying UNGPs in the Technology Sector.” Business for Social Responsibility.

- Ranking Digital Rights. 2019. Corporate Accountability Index. Washington D.C.: New America.

- In particular, Microsoft only reports requests from individuals to remove nonconsensual pornography, also referred to as “revenge porn,” which is the sharing of nude or sexually explicit photos or videos online without an individual’s consent. See “Content Removal Requests Report – Microsoft Corporate Social Responsibility.” Microsoft.