A Chapter of: Digital Deceit II

Transparency

An important part of the disinformation problem is driven by the opacity of its operations and the asymmetry of knowledge between the platform and the user. The starting point for reform is to rein in the abuses of political advertising. Ad-driven disinformation flourishes because of the public’s limited understanding of where paid political advertising comes from, who funds it, and most critically, how it is targeted at specific users. Even the moderately effective disclosure rules that apply to traditional media do not cover digital ads. It is time for the government to close this destructive loophole and shape a robust political ad transparency policy for digital media. These reforms should accompany a broader “platform transparency” agenda that includes revisiting the viability of the traditional “notice and consent” system in this era of trillion-dollar companies, exposing non-human online accounts, and developing a regime for auditing the social impact of algorithms that affect the lives of millions through automated decisions.

Political Ad Transparency

The lowest-hanging fruit for policy change to address the current crisis in digital disinformation is to increase transparency in online political advertising. Currently, the law requires broadcast, cable, and satellite media channels that carry political advertising to ensure that a disclaimer appears on the ad indicating who paid for it.1 Online advertisements, although they represent an increasingly large share of political ad spending, do not carry this requirement. A 2014 Federal Election Commission decision on this issue concluded that digital ads were physically too small for a disclaimer to be feasible.2 And even if the rule had applied, it would have covered only a narrow category of paid political communications. As a result, Americans have no way to judge accurately who is trying to influence them with digital political advertising.

The effect of this loophole is malignant to democracy. During the 2016 election cycle, a group of Russian government operatives conducted an information operation that made extensive use of online advertising to target American voters with deceptive communications. According to Facebook’s internal analysis, these communications reached 126 million Americans with only a modest amount of funding.3 In response, the Department of Justice filed criminal charges against 13 Russians in February 2018.4 If the law had required greater transparency into the sources of political advertising and the labeling of paid political content, these illegal efforts to influence the U.S. election could have been spotted and eliminated before they reached their intended audiences.

But the problem is much larger than nefarious foreign actors. Many other players in this market seek to leverage online advertising to disrupt and undermine the integrity of democratic discourse, for a variety of reasons. The money spent by the Russian agents was a drop in the bucket of overall online political ad spending during the 2016 election cycle. Total online ad spending by political candidates alone in the 2016 cycle was $1.4 billion, up almost 800 percent from 2012 and roughly equal to what candidates spent on cable television ads (which do require funding disclosures).5 The total spent on unreported political ads (e.g., issue ads sponsored by companies, unions, advocacy organizations, or PACs that do not mention a candidate or party) may well be considerably higher. Only the companies that sold the ad space could calculate the true scope of the political digital influence market, because there is no public record of these ephemeral ad placements. We simply do not know how big the problem may be.

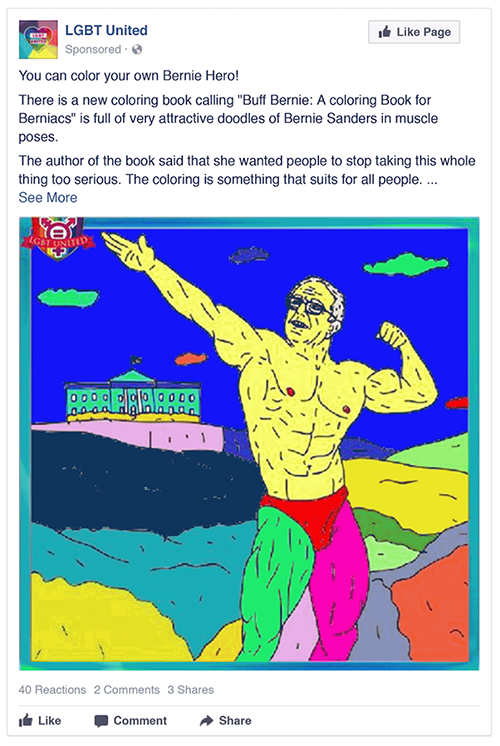

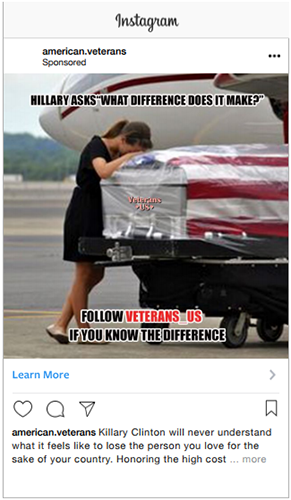

Examples of Russian disinformation on Facebook and Instagram in the lead-up to the 2016 presidential election

These ads were among those released by the House Intelligence Committee in November 2017.

Posted on: LGBT United group on Facebook

Created: March 2016

Targeted: People ages 18 to 65+ in the United States who like “LGBT United”

Results: 848 impressions, 54 clicks

Ad spend: 111.49 rubles ($1.92)

Posted on: Instagram

Created: April 2016

Targeted: People ages 13 to 65+ who are interested in the tea party or Donald Trump

Results: 108,433 impressions, 857 clicks

Ad spend: 17,306 rubles ($297)

Posted on: Facebook

Created: October 2016

Targeted: People ages 18 to 65+ interested in Christianity, Jesus, God, Ron Paul and media personalities such as Laura Ingraham, Rush Limbaugh, Bill O’Reilly and Mike Savage, among other topics

Results: 71 impressions, 14 clicks

Ad spend: 64 rubles ($1.10)

Posted on: Instagram

Created: August 2016

Targeted: People ages 18 to 65+ interested in military veterans, including those from the Iraq, Afghanistan and Vietnam wars

Results: 17,654 impressions, 517 clicks

Ad spend: 3,083 rubles ($53)

What we do know is that none of these ads carried the level of transparency voters need to understand who sought to influence their views and for what reason. Many online ads actively seek to cloak their origins and strategic aims. They are typically targeted at select groups of users. And unlike television ads, these messages are not publicly available: they are visible only to the target audience at the moment of ad delivery, and then they disappear. (The recent introduction of ad transparency databases by Facebook and Twitter has changed this, but for most users, the experience of political ads remains much the same.) And they are published on digital platforms by the millions. The special features of digital advertising that make it so popular (algorithmic targeting and content customization) make it possible to test thousands of different ad variations per day against thousands upon thousands of different configurations of audience demographics.6 Political advertisers can easily send very different (and even contradictory) messages to different audiences. Until very recently, they need not have feared exposure and the public embarrassment that follows.

Because of these unique targeting features, the consequences of opacity are far worse for digital ads than for traditional media channels. For that reason, policy reform to increase transparency in online political advertising must move beyond simply achieving equal regulation of traditional and new media channels. Online ads require a higher standard to achieve the same level of public transparency and disclosure about the origins and aims of advertisers that seek to influence democratic processes. We should aim not for regulatory parity but for outcome parity.

We applaud the efforts of congressional leaders to move a bipartisan bill—the Honest Ads Act—that would represent significant progress on this issue.7 And we are glad to see that public pressure has reversed the initial opposition of technology companies to this legislative reform. The significant steps that Google,8 Facebook,9 and Twitter10 have pledged to take in the way of self-regulation to disclose more information about advertising are important corporate policy changes. However, these efforts should be backstopped with clear rules and brought to their full potential through enforcement actions in cases of noncompliance.

Even then, the combination of self-regulation and current legislative proposals does not go far enough to contain the problem. None of the major platforms’ transparency products (the Facebook Ad Archive,11 Google’s Political Ad Transparency Report, and Twitter’s Ad Transparency Center12) makes available the full context of targeting parameters that resulted in a particular ad reaching a particular user. Google and Twitter limit transparency to a very narrow category of political ads, and both offer far less information than Facebook (though users must be logged into a Facebook account to see the Facebook data). None of this transparency is available in any market other than the United States, with the exception of Brazil, where Facebook recently implemented the ad transparency center ahead of the October 2018 elections. The appearance of these ad transparency products is an important step forward for the companies, but it also exposes the gap between what we have and what we need.

There are various methods we might use to achieve an optimal outcome for ad transparency. In our view, the ideal solution should feature five components. These are drawn from our own analysis and favorable readings of ideas suggested by various commentators and experts,13 as well as the strong foundation of the bipartisan Honest Ads Act.

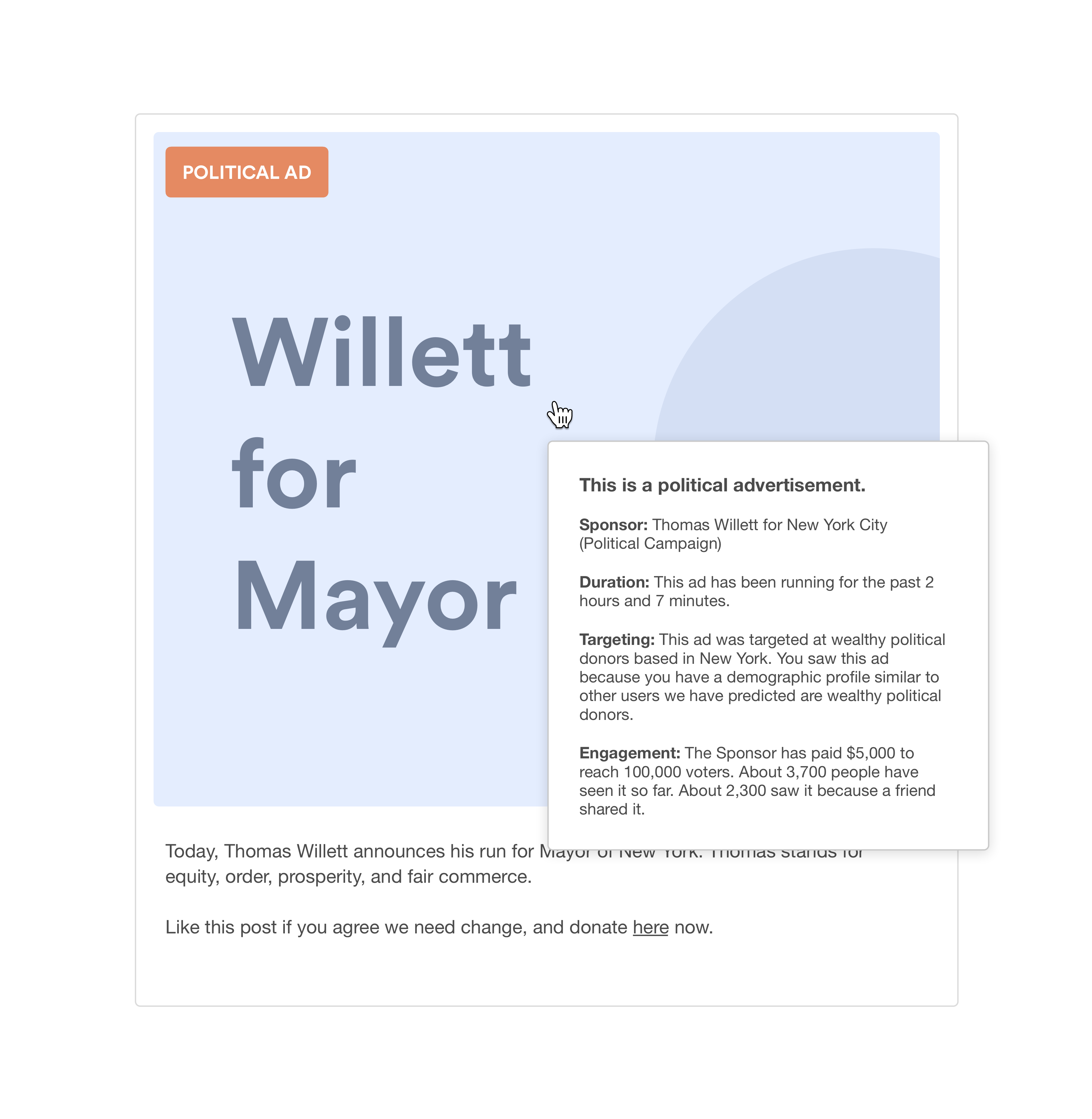

- Clear On-Screen Designation: All political ads that appear in social media streams should be clearly marked with a consistent designation, such as a bright red box labeled “Political Ad” in bold white text, or bold red text in the subtitle of a video ad. There should also be strict size requirements for these disclosures, for instance that they occupy at least five to ten percent of the space of the advertisement. Too often in digital media streams, the markings on paid content are so unobtrusive that users may overlook the designation.

- Real-Time Disclosure in the Ad: Clear information about the ad should be pushed to the user in the payload of the ad at the same time that the ad renders (e.g., a pop-up box triggered when the cursor hovers over text and image ads, or subtitle text for video ads). It is not enough for this information to be available somewhere else on the internet, or to be accessible only through an active click-through. The following data points should be included:

  - Sponsor of the ad: The name of the organization that paid to place the ad, the total amount it spent to place the ad, and a list of its disclosed donors;

  - Time and audience targeting parameters: The time period over which the ad is running, the demographic characteristics selected by the advertiser for targeting the ad, the organization whose custom target list the recipient belongs to (if applicable), and the demographic characteristics that indicate why the recipient was included in the target list (including platform-determined “look-alike” ascriptions, if applicable);

  - Engagement metrics: The number of user impressions the ad buyer has paid for with the present ad, and the number of active engagements by users.

- Open API to Download Ad Data: All of the information in the real-time disclosure for each ad should be compiled and stored by digital advertising platforms in machine-readable, searchable databases available through an open application programming interface (API). If the ad mentions a candidate, political party, ballot measure, or clear electoral issue, that fact should be logged. In addition, this database should include complete figures on engagement metrics, including the total number of user engagements and the total number of ad impressions (paid and unpaid). This data should be available online for public review at all times, for all advertisers above a low minimum threshold of total ad spending per year.

- Financial Accountability: Advertisers whose political ad spending exceeds a minimum threshold should report the provenance of their funds, including major donors, to election authorities as a form of campaign finance disclosure. Advertising platforms should require political advertisers to submit evidence of this reporting before a second political ad buy.

- Advertiser Verification: Election authorities should impose “know your customer” rules that require digital ad platforms that cross a minimum level of ad spending to verify the identity of political advertisers and take all reasonable measures to prevent foreign nationals from attempting to influence elections.
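
The Real-Time Disclosure and Open API components above describe, in effect, a per-ad data record. A minimal sketch of such a record follows, assuming a Python dataclass serialized to JSON as the machine-readable format an open API might return. The `AdDisclosure` class, its field names, and the sample values are all hypothetical illustrations; they are not taken from the Honest Ads Act or from any platform’s actual ad archive schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class AdDisclosure:
    """Hypothetical per-ad disclosure record; field names are illustrative only."""

    # Sponsor of the ad
    sponsor: str
    amount_spent_usd: float
    disclosed_donors: List[str]
    # Time and audience targeting parameters (dates as ISO 8601 strings)
    run_start: str
    run_end: str
    advertiser_targeting: List[str]
    custom_list_owner: Optional[str] = None  # whose custom target list, if any
    lookalike_reasons: List[str] = field(default_factory=list)
    # Engagement metrics
    paid_impressions: int = 0
    unpaid_impressions: int = 0
    engagements: int = 0
    # Candidates, parties, ballot measures, or electoral issues mentioned
    electoral_mentions: List[str] = field(default_factory=list)

    @property
    def total_impressions(self) -> int:
        """Paid plus unpaid impressions, as the database entry would report."""
        return self.paid_impressions + self.unpaid_impressions

    def to_api_json(self) -> str:
        """Serialize the record (plus total impressions) as JSON for a public API."""
        payload = asdict(self)
        payload["total_impressions"] = self.total_impressions
        return json.dumps(payload, sort_keys=True)


# Example record with made-up values
record = AdDisclosure(
    sponsor="Example Advocacy Group",
    amount_spent_usd=250.0,
    disclosed_donors=["Donor A", "Donor B"],
    run_start="2018-09-01",
    run_end="2018-09-30",
    advertiser_targeting=["ages 18 to 65+", "interested in military veterans"],
    paid_impressions=1000,
    unpaid_impressions=200,
    engagements=50,
    electoral_mentions=["issue: veterans' benefits"],
)
print(record.to_api_json())
```

Because every field is plain data, one record of this kind could back both the pop-up shown when the ad renders and the downloadable database entry, so the two disclosures cannot drift apart.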

Underneath all of these provisions, we need to take care to get the definitions right and to recognize the scale and complexity of the digital ad market compared to traditional media. Current transparency rules governing political ads are triggered only under limited circumstances, in particular for ads that mention a candidate or political party and that are transmitted within a certain time period before the election. These limits must be abandoned (or dramatically reconsidered) in recognition of the scope and complexity of paid digital communications, the prevalence of issue ads relative to candidate ads,14 and the permanent campaign that characterizes contemporary American politics. Given these parameters, it becomes clear why all ads must be captured in a searchable, machine-readable database with an API accessible to researchers and journalists who have a public service mission to make sense of the influence business on digital media.
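
The gap between a narrow trigger and the broader capture argued for here can be illustrated with two simple predicates. This is a sketch under stated assumptions: the `Ad` fields, the function names, and the 60-day `window_days` value are hypothetical placeholders, not the terms of any actual statute or FEC rule.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Ad:
    """Hypothetical, simplified description of a paid message."""
    mentions_candidate_or_party: bool
    addresses_political_issue: bool
    run_date: date


def narrow_trigger(ad: Ad, election_day: date, window_days: int) -> bool:
    """Current-style rule: disclose only if the ad names a candidate or party
    AND runs within `window_days` before the election.
    `window_days` is an illustrative parameter, not a statutory value."""
    days_before = (election_day - ad.run_date).days
    return ad.mentions_candidate_or_party and 0 <= days_before <= window_days


def flag_as_political(ad: Ad) -> bool:
    """Proposed approach: every ad is logged in the database regardless;
    this flag marks the ones voters should scrutinize as political."""
    return ad.mentions_candidate_or_party or ad.addresses_political_issue


election = date(2018, 11, 6)
issue_ad = Ad(mentions_candidate_or_party=False,
              addresses_political_issue=True,
              run_date=date(2018, 3, 1))

print(narrow_trigger(issue_ad, election, window_days=60))  # False: escapes disclosure
print(flag_as_political(issue_ad))                          # True: flagged for voters
```

An issue ad that never names a candidate escapes the narrow rule no matter when it runs, while under the broader approach it is still logged and flagged as political.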

The Honest Ads Act would achieve some of these objectives.15 The proposed legislation extends current laws requiring disclaimers on political advertising in traditional media to cover digital advertising. It requires a machine-readable database of digital ads that includes the content of the ad; a description of the audience (targeting parameters); the rate charged for the ad; the name of the candidate, office, or election issue mentioned in the ad; and contact information for the person who bought the ad. And it requires all advertising channels (including digital) to make all reasonable efforts to prevent foreign nationals from attempting to influence elections. Even FEC bureaucrats may get in on the action with their (admittedly tepid) proposed rules to govern digital ad disclaimers.16

As public pressure builds in the run-up to the 2018 elections, we may well see additional measures added to this list. Notably, a recent British parliamentary report calls for a ban on micro-targeting political ads using Facebook’s “lookalike” audiences, as well as a minimum number of individuals that every political ad must reach.17

We favor a system that would push this kind of disclosure for political ads as quickly as possible to guard fast-approaching elections against exploitation. We acknowledge that defining “political” will always be controversial. However, Facebook’s current definition, which covers any ad that “relates to any national legislative issue of public importance in any place where the ad is being run,” is a good start.18 The company offers a list of issues19 covered under this umbrella (though only for the United States at present). These categories could be far more difficult to maintain globally and over a long period of time. Consequently, we expect these measures will ultimately be extended to all ads, regardless of topic or target. This will also result in a cleaner policy for companies that lack the resources Facebook can bring to the problem.

But even in a potential future of total ad transparency, a method of flagging which ads fall into the category of political communications would still be preferable, to signal that voters should pay particular attention to the origin and aims of those ads. Of course, we are mindful of the significant constitutional questions raised by these kinds of disclosure requirements. We welcome that discussion as a means of honing the policy into the most precise tool for serving the public interest without unduly limiting individual freedoms. A full analysis of First Amendment jurisprudence is beyond the scope of this report, but we believe proposals like this will withstand judicial scrutiny.

Getting political ad transparency right in American law is not only a critical domestic priority; it also has global implications, because the leading internet companies will want to extend whatever policies apply here to the rest of the world in order to maintain platform consistency. This is an incentive to get the “gold standard” right, particularly under American law, which sets a high bar of protection for free expression. But it also raises the question of how to anticipate problems that may arise in the international context. For example, there is a strong argument that advertisers who promote controversial social or political issues at a low level of total spending (indicating civic activism rather than an organized political operation) should be shielded from total transparency in order to protect them from persecution. We could contemplate a safe harbor for certain kinds of low-spending advertisers, particularly individuals, in order to balance privacy rights against the public interest goals of transparency.

Our view is that online ad transparency is a necessary but far from sufficient condition for addressing the current crisis in digital disinformation. Transparency is only a partial solution, and we should not overstate what it can accomplish on its own. But we should try to maximize its impact by requiring transparency to be overt and real-time rather than filed in a database sometime after the fact. To put it simply, if all we get is a database of ad information somewhere on the internet that few voters ever have cause or interest to access, then we have failed. We strongly believe contextual notification is necessary: disclosure embedded in the ad itself that goes beyond basic labeling. And this message must include the targeting selectors that explain to the user why she got the ad. This is the digital equivalent of the now-ubiquitous candidate voice-over, “I approve this message.” Armed with this data, voters will have a signal to pay critical attention, and they will have a chance to judge the origins, aims, and relevance of the ad.
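
As a rough illustration of contextual notification, the sketch below composes the short, human-readable notice that could travel with an ad’s payload and tell the recipient who paid and why she was targeted. The `render_ad_notice` function, its parameters, and the notice wording are hypothetical; the report does not prescribe a format, and a real platform would render this in its own interface.

```python
from typing import List, Optional


def render_ad_notice(sponsor: str,
                     selectors: List[str],
                     custom_list_owner: Optional[str] = None,
                     lookalike: bool = False) -> str:
    """Compose a plain-text 'why you got this ad' notice (hypothetical format)."""
    lines = ["Political Ad", f"Paid for by: {sponsor}"]
    if selectors:
        # Targeting selectors chosen by the advertiser
        lines.append("You were targeted because: " + "; ".join(selectors))
    if custom_list_owner:
        # The recipient appears on an uploaded custom audience list
        lines.append(f"You are on a custom audience list supplied by: {custom_list_owner}")
    if lookalike:
        # The platform, not the advertiser, matched the recipient to the audience
        lines.append("The platform added you as a look-alike of the advertiser's target audience.")
    return "\n".join(lines)


notice = render_ad_notice(
    sponsor="Example Advocacy Group",
    selectors=["ages 18 to 65+", "interested in military veterans"],
)
print(notice)
```

Keeping the notice to a few plain lines is a deliberate choice here: it is meant to be read in the moment the ad appears, not consulted later like a database entry.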

Platform Transparency

Building on the principle that increased transparency translates directly into citizen and consumer empowerment, we believe a number of other proposals deserve serious consideration in this field. These include exposing the presence of automated accounts on digital media, developing systems to audit the social impact of algorithmic decision-making that affects the public interest, and reforming the “notice and consent” regime in terms of service, which relies on the dubious assumption that consumers understand (or have a choice in) what they have agreed to.

First, we find the so-called “Blade Runner” law a compelling idea (and not just a clever title).20 This measure would prohibit automated channels in digital media (including Twitter handles) from presenting themselves as human users to other readers or audiences. In effect, bots would have to be labeled as bots, either by the users who create them or by the platform that hosts the accounts. A bill with this intent has been moving through the California legislature.21 There are different ways to implement this, including a regime that applies a less onerous restriction to accounts that are human-operated but post on a transparent, automated timetable.
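
One way to picture the tiered regime mentioned above is a mapping from a declared account type to the label shown on its posts. The sketch below is purely hypothetical: the `AccountKind` categories, the `disclosure_label` function, and the label wording are illustrative assumptions, not provisions of the California bill or of any platform policy.

```python
from enum import Enum
from typing import Optional


class AccountKind(Enum):
    """Hypothetical categories an account operator or platform might declare."""
    HUMAN = "human"
    HUMAN_SCHEDULED = "human_scheduled"  # human-run, posts on a transparent automated schedule
    AUTOMATED = "automated"              # posts generated and sent by software


def disclosure_label(kind: AccountKind) -> Optional[str]:
    """Return the label shown next to an account's posts, or None if no label applies.

    The lighter label for scheduled human accounts stands in for the
    less onerous restriction; the label texts themselves are illustrative.
    """
    if kind is AccountKind.AUTOMATED:
        return "Automated account"
    if kind is AccountKind.HUMAN_SCHEDULED:
        return "Scheduled posts"
    return None


for kind in AccountKind:
    print(kind.value, "->", disclosure_label(kind))
```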

The Blade Runner law would give digital media audiences a much clearer picture of how many automated accounts populate online media platforms and begin to shift the norms of consumption toward more trusted content. Such transparency measures would not necessarily stigmatize all automated content. Clearly labeled bots that provide a useful service (such as a news organization tweeting breaking news alerts or a weather service warning that a storm is approaching) would be recognized and accepted for what they are. But the nefarious activities of bot armies posing as humans would be undermined, and their operators would probably shift to other tactics as efficacy declined. We are sensitive to the critique that this proposal could chill certain kinds of free expression that rely on automation, and we would suggest ways for users to whitelist certain kinds of automated traffic on an opt-in basis. But the overall public benefit of transparency as a defense against bot-driven media is clear and desirable.

Second, we see growing importance in establishing new systems for social impact oversight, or auditing, of algorithmic decision-making. The increasing prominence of AI and machine learning algorithms in the tracking-and-targeting data economy has raised alarm bells in the research community, in certain parts of industry, and among policymakers.22 These technologies have enormous potential for good, including applications in healthcare diagnostics, reducing greenhouse gas emissions, and improving transportation safety. But they may also cause and perpetuate serious public interest harms by reinforcing social inequalities, polarizing an already divisive political culture, and stigmatizing already marginalized minority communities.

It is therefore critical to apply independent auditing to automated decision-making systems that have the potential for high social impact. The research community has already begun to develop such frameworks.23 They are particularly urgent for public sector uses of AI, which are not inconsiderable given U.S. government R&D spending and activity.24 And there are a few preliminary regulatory approaches to overseeing the private sector worth watching, including the GDPR provision that gives users the right to opt out of decisions based solely on automated processing.25 These new oversight techniques would be designed to evaluate how and whether automated decisions infringe on existing rights, or whether they should be subject to existing anti-discrimination laws or laws against anti-competitive practices.

The idea of independent review of algorithmic social impact is not a radical proposal. There are clear precedents in U.S. oversight of large technology companies. In its 2011 consent order with Facebook, the FTC required the company to submit to external auditing of its privacy policies and practices to ensure compliance with the agreement. In light of recent revelations of major privacy scandals at Facebook despite this oversight, many have rightly criticized the third-party audits of Facebook as ineffective. But one failure is not a reason to abandon the regulatory tool altogether; it should instead serve as an invitation to strengthen it.

Consider the possibility of a team of expert auditors (which might include at least one specialist from a federal regulatory agency working alongside contractors) regularly reviewing advanced algorithmic technologies deployed by companies that have substantial bearing on public interest outcomes. The idea here is not a simple code review, which can rarely provide much insight into the complexity of AI.26 Rather, this type of audit should be designed with considerably more rigor, examining the data used to train those algorithms and the potential for bias in the assumptions and analogies they draw upon. It would also permit auditors to run controlled experiments over time to determine whether the commercial algorithms under review are producing unintended consequences that harm the public. These kinds of ideas are new and untested, but once upon a time, so too were the wild-eyed notions of independent testing of pharmaceuticals and random inspection of food safety. Industry and civil society have already begun to work together in projects like the Partnership on AI to identify standards around fairness, transparency, and accountability.27

Finally, as we begin to consider new rules for digital ad transparency, we should take the opportunity to revisit larger questions about transparency between consumers and digital service providers. Our entire system of market information, which was never particularly good at keeping consumers as well informed as service providers, is on the brink of total failure. As we move deeper into the age of AI and machine learning, this situation is going to get worse. The entire concept of “notice and consent” (the notion that a digital platform can tell consumers what personal data will be collected and monetized and then receive their affirmative approval) is rapidly breaking down. The most prominent examples are the opacity surrounding how consumer data collection informs targeted advertising, how automated accounts proliferate on digital media platforms, and how large-scale automated decisions can result in consumer harm. As we move to tackle these urgent problems, we should be fully aware that we are addressing only one piece of a much larger puzzle. But it is a start.

Citations

- Ellen L. Weintraub, “Draft internet communications disclaimers NPRM,” Memorandum to the Commission Secretary, Federal Election Commission, Agenda Document No. 18-10-A, February 15, 2018.

- “Statement of Reasons of Chairman Lee E. Goodman and Commissioners Caroline C. Hunter and Matthew S. Petersen, In the Matter of Checks and Balances for Economic Growth”, Federal Election Commission, MUR 6729, October 24, 2014.

- Mike Isaac, “Russian Influence Reached 126 Million Through Facebook Alone”, New York Times, October 30, 2017.

- See United States of America v. Internet Research Agency, February 16, 2018.

- Kate Kaye, “Data-Driven Targeting Creates Huge 2016 Political Ad Shift: Broadcast TV Down 20%, Cable and Digital Way Up”, Ad Age, January 3, 2017.

- Lauren Johnson, “How 4 Agencies Are Using Artificial Intelligence as Part of the Creative Process”, Adweek, March 22, 2017.

- “Honest Ads Act”

- Kent Walker, “Supporting election integrity through greater advertising transparency”, Google, May 4, 2018.

- “Hard Questions: What is Facebook Doing to Protect Election Security?”, Facebook, March 29, 2018.

- Bruce Falck, “New Transparency For Ads on Twitter”, Twitter, October 24, 2017.

- See the Facebook Ad Archive.

- See the Twitter Ad Transparency Center.

- Aaron Rieke and Miranda Bogen, “Leveling the Platform: Real Transparency for Paid Messages on Facebook”, Team Upturn, May 2018; Young Mie Kim, “Report: Closing the Digital Loopholes that Pave the Way for Foreign Interference in U.S. Elections”, Campaign Legal Center, April 16, 2018.

- Laws governing political ad transparency are often limited to ads that mention a candidate, a political party, or a specific race for an elected office. Yet a great many ads intended to influence voters mention none of these things; instead, they focus on a political issue that is clearly identified with one party or another. Hence, reform advocates have argued that the scope of political ad transparency must be extended to reach all ads that address a political issue, rather than the narrower category of ads that reference candidates or political parties.

- Sen. Amy Klobuchar, S. 1989: “Honest Ads”, U.S. Congress, October 19, 2017.

- “REG 2011-02 Internet Communication Disclaimers”

- See UK report, Conclusions, Paragraph 36

- Facebook’s political advertising policy was announced in May 2018. In addition to this catch-all category, it also includes specific reference to candidate ads and election-related ads.

- See, Facebook’s list of “national issues facing the public.”

- Many have proposed versions of this idea, but it was popularized by Tim Wu’s New York Times opinion piece: Tim Wu, “Please Prove You’re Not a Robot”, New York Times, July 15, 2017.

- Senator Hertzberg, Assembly Members Chu and Friedman, “SB-1001 Bots: Disclosure”, California Senate, June 26, 2018.

- Julia Powles, “New York City’s Bold, Flawed Attempt to Make Algorithms Accountable”, New Yorker, December 20, 2017; Big Data: A Report on Algorithmic Systems, Opportunity, and Civil Rights, Executive Office of the President, May 2016

- Dillon Reisman, Jason Schultz, Kate Crawford and Meredith Whittaker, “Algorithmic Impact Assessments: A Practical Framework for Public Agency Accountability”, AI Now, April 2018; Aaron Rieke, Miranda Bogen, David Robinson, and Martin Tisne, “Public Scrutiny of Automated Decisions,” Upturn and Omidyar Network, February 28, 2018.

- See, e.g., The National Artificial Intelligence Research and Development Strategic Plan, Networking and Information Technology Research and Development Subcommittee, National Science and Technology Council, October 2016; and “Broad Agency Announcement, Explainable Artificial Intelligence (XAI),” Defense Advanced Research Projects Agency, DARPA-BAA-16-53, August 10, 2016.

- European Commission, GDPR Articles 4(4), 12, and 22, Official Journal of the European Union, April 27, 2016.

- See, e.g., Will Knight, “The Dark Secret at the Heart of AI,” MIT Technology Review, April 11, 2017.

- “Tenets,” The Partnership on AI.